Ben Feist, a data visualization software engineer, works on making data more presentable and easier to understand. He first applied this visualization technique to space missions with the last lunar landing mission, Apollo 17. He spent hundreds of hours of volunteer time restoring and presenting all the sound and images from that mission. What followed was a 21st-century digital timeline that allowed anyone to relive the mission in real time. He now works for NASA, and Apollo 13 is his latest data visualization for them.

The 50th anniversary of the suspenseful Apollo 13 mission is fast approaching. Astronauts Jim Lovell, Fred Haise, and last-minute addition Jack Swigert were its crew. On April 11, 1970, at 13:13 Central Standard Time, Apollo 13 blasted off from Launch Complex 39A on top of the mighty Saturn V rocket. It was to be the third lunar landing mission. However, about 56 hours into the mission, Houston instructed the crew to “stir the cryo tanks.” A large explosion in the Service Module soon followed, and near-deadly chaos ensued.

Restoration Project

Although many of us were not alive when the Apollo 13 mission happened, there is one way to relive it: apolloinrealtime.org. Ben Feist created this site. Recently, I had the wonderful opportunity to interview him over the phone. I asked him about what inspired him, why he works in the field he does, and what he does as a software engineer.

Over the past two years, he also helped with the audio restoration for the spectacular Apollo 11 documentary, which premiered on January 29, 2019. In this interview, we talked about the Apollo missions and how he did the audio restoration for apolloinrealtime.org and Apollo 11.

Interview

So, how could you, the average person, experience it to the fullest 50 years later? Well, Feist started apolloinrealtime.org after he spent six years of his life filtering through and digitizing all the Apollo 17 air-to-ground audio.

“[Apollo] 17 was way worse,” Ben said, when asked how it differed from restoring audio from Apollo missions 11 and 13. “It’s great to be able to play with material and to experience it, it’s very enriching, and a very fun thing to do, not a job,” Feist said about his views on the audio. You can find detailed descriptions of that process on benfeist.com.

Why was this difficult?

Restoring this audio and turning it into a digital file format has been no easy feat; luckily, Ben walked me through it. The National Archives houses tens of thousands of hours of audio from all the Apollo missions. NASA archived the audio on one-inch-wide, open-reel tapes that hold 16 hours each. Each tape contains 30 tracks of audio. “It was another computer science problem that I didn’t know I was gonna need to solve and [I] figured out how to do it!” Ben said, before going into detail.

The Last Machine in the World

“The machine introduced a speed variation into all of the recordings. And just because it was old, and the motor wasn’t balanced anymore… there was all of this buzzing and speed variation in the recording,” Ben explained. Only one machine exists in the world that can still replay these tapes, and it lives at Johnson Space Center (JSC), in Houston, Texas.

Speed Variation Issues

Because of this speed variation, the audio output sounded a bit off: “These speed variations are called in the recording industry, I’ve learned, terms called ‘wow’ and ‘flutter’.” The term “wow” refers to slower, longer-period variations in the speed. It got its name because it makes a “wow”-like sound. The term “flutter” refers to much faster variations in the speed, which can often sound like an opera singer holding a note. You can find an example on YouTube.

Many people have attempted to solve this problem previously. However, a challenge like this, with 30 audio tracks, requires a multifaceted approach. Hence, Ben said that he began reaching out to people who might be able to solve this issue. Soon, he connected with a biology graduate student, who helped solve it.

But how did he solve this issue exactly?

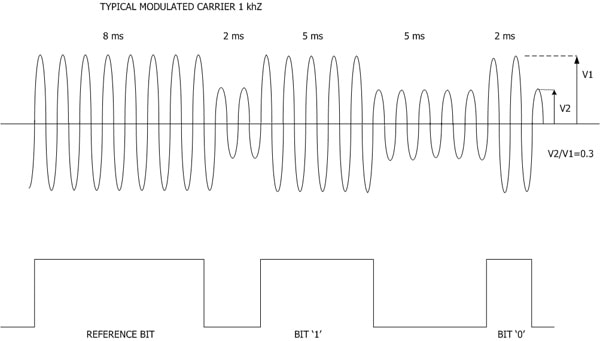

In the interview, Ben said, “… [the grad student] found the original specs for what that time code should contain. And, he found that it was supposed to have a carrier wave at one kilohertz. And for the whole thing you could actually see it warbling up and down and bobbing away as the speed changed on the tape.”

The carrier wave frequency was the breakthrough the team needed. By understanding the frequency drift, they could apply a process to correct for it, and then apply it to the other 29 tracks. After they completed this processing, each 16-hour tape only had a drift of about two seconds.
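As a rough illustration of that idea (not the team's actual code), the sketch below uses NumPy to recover the tape's true timeline from the phase of a 1 kHz pilot tone, and then resamples another track onto that timeline. The function names, the 8 kHz sample rate, the synthetic 440 Hz "voice" tone, and the size of the simulated wow are all assumptions made for this example.

```python
import numpy as np

def analytic_signal(x):
    """Return the analytic signal of x using the FFT (a Hilbert-transform method)."""
    n = len(x)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * weights)

def recovered_tape_time(pilot, carrier_hz):
    """Estimate the original recording time (seconds) at each playback sample.

    The pilot tone should advance by exactly carrier_hz cycles per second of
    original time, so its unwrapped phase acts as a clock.
    """
    phase = np.unwrap(np.angle(analytic_signal(pilot)))
    tape_time = (phase - phase[0]) / (2 * np.pi * carrier_hz)
    # Guard against tiny backward steps so the timeline can be interpolated.
    return np.maximum.accumulate(tape_time)

def correct_track(track, tape_time, sample_rate):
    """Resample a track so that its samples are evenly spaced in original time."""
    playback_time = np.arange(len(track)) / sample_rate
    uniform = np.arange(0, tape_time[-1], 1 / sample_rate)
    # For every evenly spaced original instant, find when it was played back...
    when = np.interp(uniform, tape_time, playback_time)
    # ...and read the track at that playback moment (linear interpolation).
    return np.interp(when, playback_time, track)

# Synthetic demo: a 0.5 Hz "wow" stretches and squeezes time on every track.
sample_rate = 8000
t = np.arange(2 * sample_rate) / sample_rate
true_time = t + 0.002 * np.sin(2 * np.pi * 0.5 * t)
pilot = np.sin(2 * np.pi * 1000 * true_time)   # timecode track carrier
voice = np.sin(2 * np.pi * 440 * true_time)    # stand-in for an audio track

tape_time = recovered_tape_time(pilot, carrier_hz=1000.0)
fixed = correct_track(voice, tape_time, sample_rate)
ideal = np.sin(2 * np.pi * 440 * np.arange(len(fixed)) / sample_rate)
print("max error before:", np.max(np.abs(voice[:len(fixed)] - ideal)))
print("max error after: ", np.max(np.abs(fixed - ideal)))
```

Because every track on a tape passed the playback head at the same moment, one timeline recovered from the timecode track can, in principle, be reused for all the other tracks. Real recordings would also need filtering to isolate the carrier from noise, which this sketch leaves out.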

IRIG Timecode

The IRIG timecode describes the elapsed time into the year as “day-of-year – hour – minute – second,” using nine decimal digits and the 24-hour clock, for example “123-19-28-30.” It builds each of the nine decimal digits out of the bits shown above in under 1/10 of a second. The described time refers to the exact moment between two adjacent reference “bits.”
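To make the “day-of-year – hour – minute – second” format concrete, here is a minimal Python sketch that turns a string such as “123-19-28-30” into seconds elapsed in the year and into a calendar date. The function names are hypothetical, and the year is passed in separately because the format as described here does not carry it.

```python
import calendar
from datetime import datetime, timedelta

def split_timecode(text, days_in_year=366):
    """Split 'DDD-HH-MM-SS' into integers and check that each field is in range."""
    day, hour, minute, second = (int(part) for part in text.split("-"))
    if not (1 <= day <= days_in_year and 0 <= hour < 24
            and 0 <= minute < 60 and 0 <= second < 60):
        raise ValueError(f"timecode out of range: {text!r}")
    return day, hour, minute, second

def seconds_into_year(text):
    """Seconds elapsed since midnight on January 1 (day 1) of the year."""
    day, hour, minute, second = split_timecode(text)
    return (day - 1) * 86400 + hour * 3600 + minute * 60 + second

def timecode_to_datetime(text, year):
    """Calendar date and time for a timecode, with the year supplied by the caller."""
    days_in_year = 366 if calendar.isleap(year) else 365
    day, hour, minute, second = split_timecode(text, days_in_year)
    return datetime(year, 1, 1) + timedelta(
        days=day - 1, hours=hour, minutes=minute, seconds=second)

print(seconds_into_year("123-19-28-30"))
print(timecode_to_datetime("123-19-28-30", 1970))
```

Day 123 of 1970 falls on May 3, so the article's example string corresponds to 19:28:30 on that date.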

Summary

Ben applied new digital audio processing techniques to NASA’s audio archive. He restored Apollo 11, 13, and 17’s audio to modern standards. His timeline presentation visually places this audio where it happened during the missions. It takes a long time to process everything and then present it, but the results are worth it. If you would like to relive Apollo 13 to the fullest, go to apolloinrealtime.org.

The Saturn V rocket poised on the pad the night before launch.

Originally published on March 23, 2020.

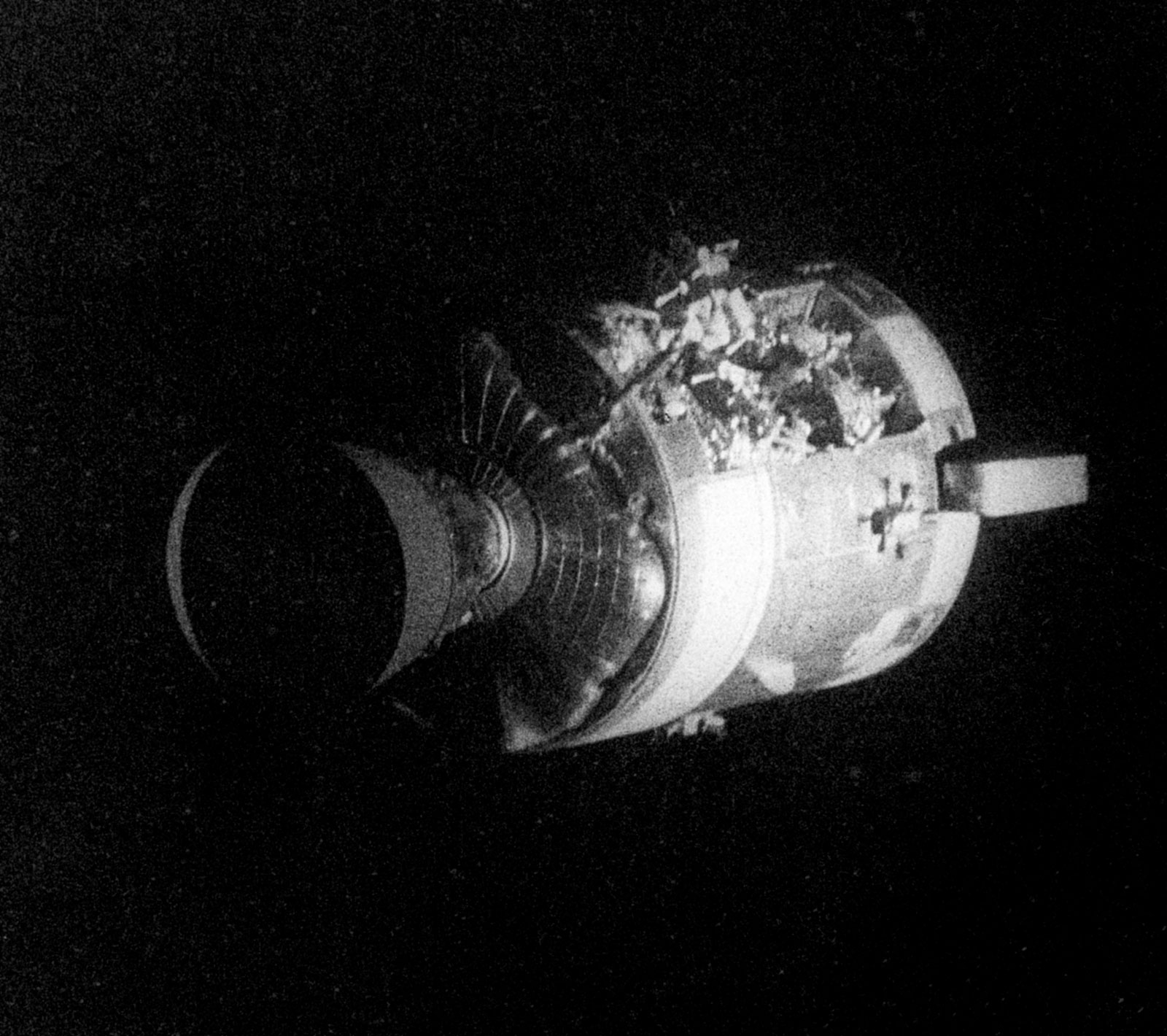

What’s the outline on the LEM’s payload area?

I don’t believe it’s an outline. The LEM payload fairing would split into 4 petals, and I think those “outlines” are the different petals and the way they unfold. Here is an example of that system: https://www.sciencephoto.com/media/759063/view/apollo-docking-and-extraction-artwork